Apache Spark

Apache Spark is a distributed data processing engine for large-scale computation.
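As a rough illustration of the processing model that Spark distributes across a cluster, the plain-Python sketch below runs a word count in three stages: a map over each partition, a shuffle that routes each key to one reducer by hashing it, and a per-key reduce. It does not use Spark itself. The function names are hypothetical, and everything runs in one process here, whereas a real engine would run each partition on a separate executor. In PySpark the same job is commonly written with the RDD methods `flatMap`, `map`, and `reduceByKey`.

```python
from collections import defaultdict
from typing import Dict, Iterable, List, Tuple

Pair = Tuple[str, int]


def map_partition(lines: Iterable[str]) -> List[Pair]:
    """Map stage: emit (word, 1) for every word in one partition."""
    return [(word, 1) for line in lines for word in line.split()]


def shuffle(mapped: List[List[Pair]], num_reducers: int) -> List[List[Pair]]:
    """Shuffle stage: send each pair to a reducer chosen by hashing its key,
    so all pairs with the same key end up in the same bucket."""
    buckets: List[List[Pair]] = [[] for _ in range(num_reducers)]
    for partition in mapped:
        for key, value in partition:
            buckets[hash(key) % num_reducers].append((key, value))
    return buckets


def reduce_partition(pairs: Iterable[Pair]) -> Dict[str, int]:
    """Reduce stage: sum the values for each key within one bucket."""
    totals: Dict[str, int] = defaultdict(int)
    for key, value in pairs:
        totals[key] += value
    return dict(totals)


def word_count(partitions: List[List[str]], num_reducers: int = 2) -> Dict[str, int]:
    # Each partition is mapped independently (in parallel on a real cluster).
    mapped = [map_partition(p) for p in partitions]
    reduced = [reduce_partition(b) for b in shuffle(mapped, num_reducers)]
    # Every key lives in exactly one bucket, so merging is a plain update.
    result: Dict[str, int] = {}
    for part in reduced:
        result.update(part)
    return result


if __name__ == "__main__":
    data = [["spark is fast", "spark scales"], ["data is big"]]
    print(word_count(data))
```

The shuffle is the expensive step in practice, because it moves data between machines. That is why engines try to reduce within each partition before shuffling whenever the operation allows it.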

Data Engineering

Data Engineering is the design and construction of systems for collecting, storing, and processing data. On this site, it matters because the skill set transfers across technical, operational, and venture work rather than staying confined to one narrow context.

Learn more: https://en.wikipedia.org/wiki/Data_engineering

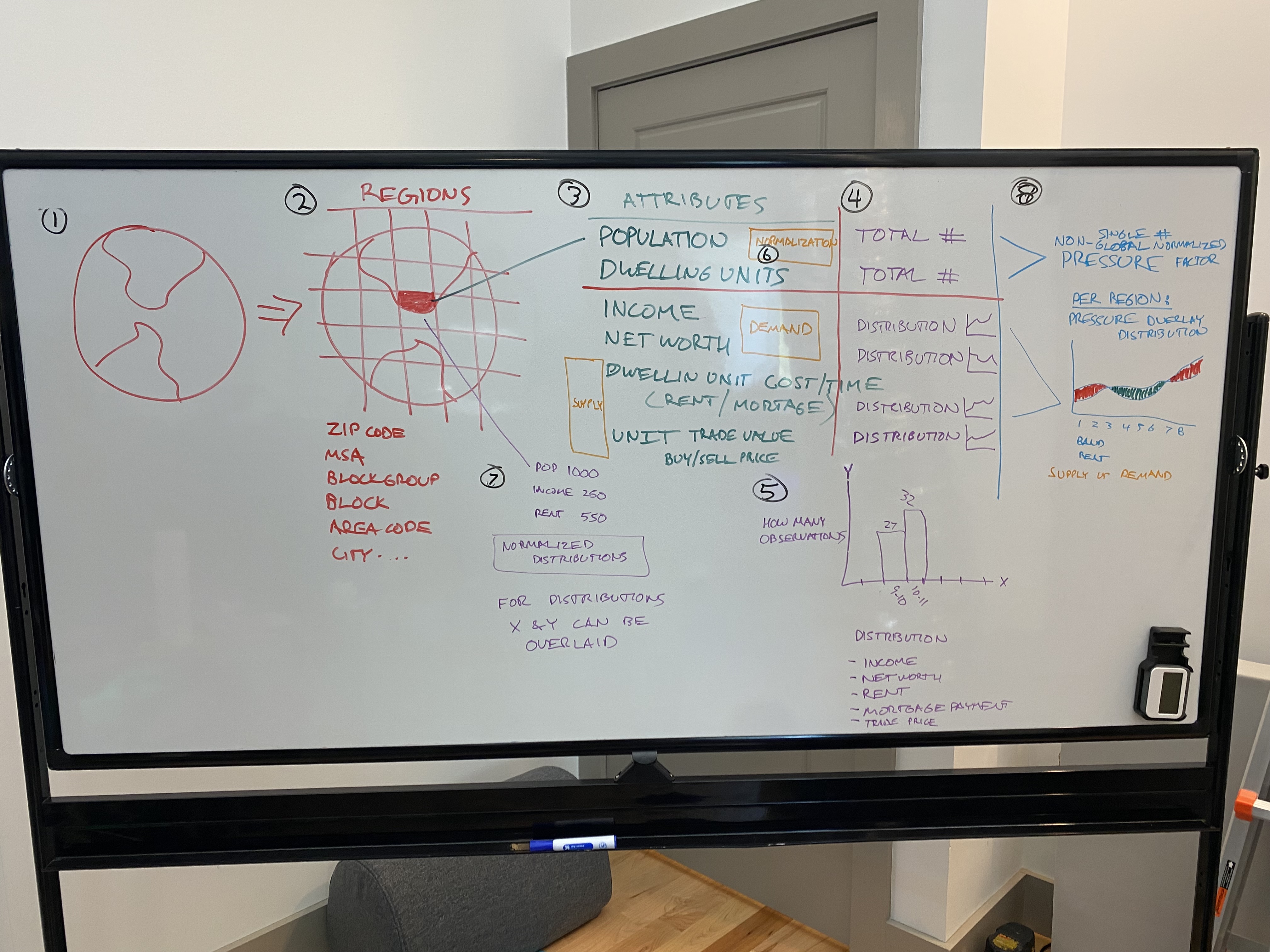

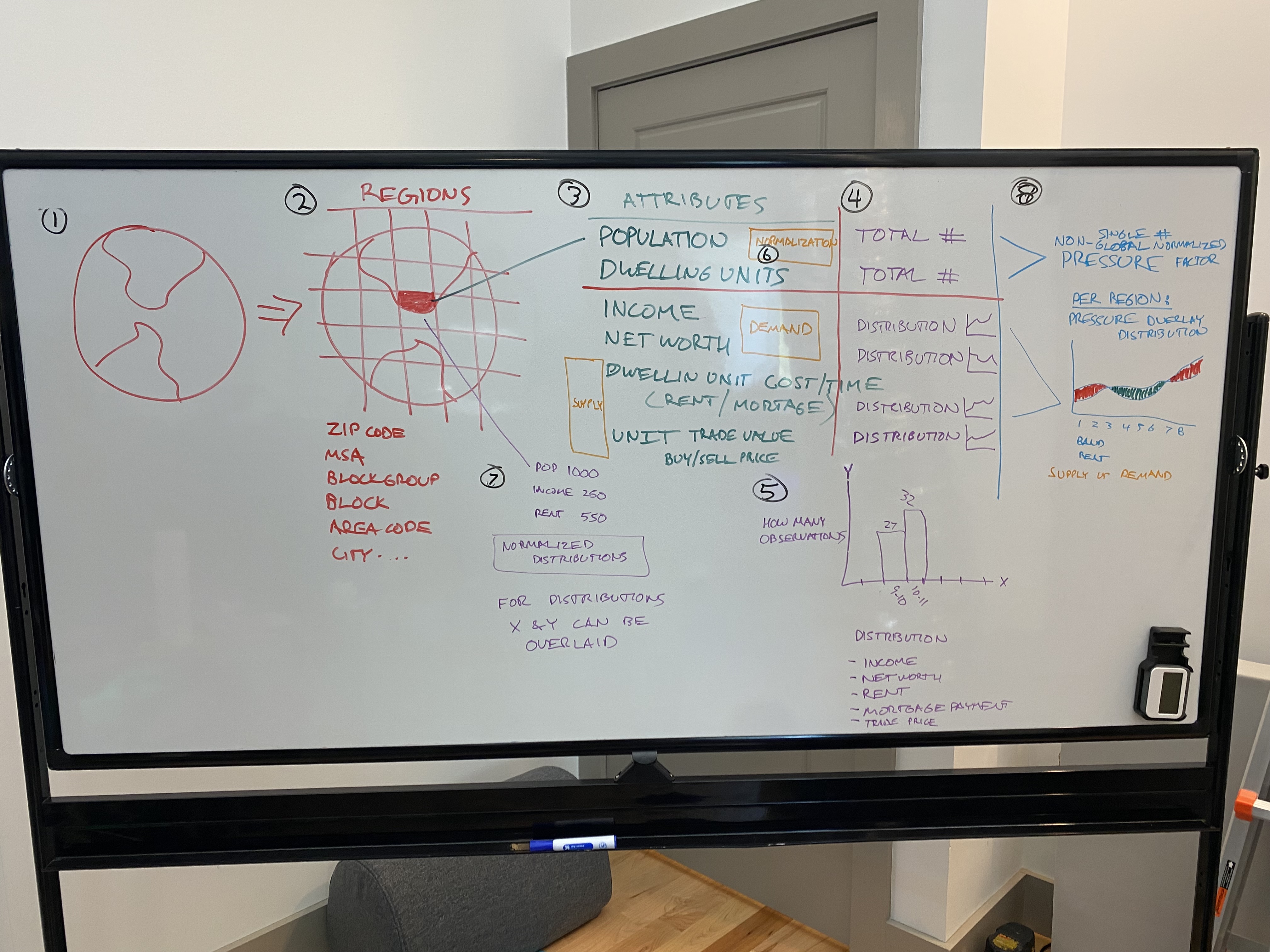

Designed a unified data model to integrate any data source into a big data geospatial analytics program, handling over 100 TB of data in GCS and BigQuery with...

Projects building data pipelines, warehouses, lakes, and large-scale analytics infrastructure.

Tracking systems record the origin, transformations, and dependencies of data across pipelines and reports.
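A minimal sketch of what such a tracking system stores, assuming a simple in-memory graph: each derived dataset records the transformation that produced it and its direct inputs, and a traversal recovers its full upstream dependencies and raw origins. The `LineageGraph` class, its methods, and the dataset names are hypothetical. Production lineage tools persist this metadata and capture it automatically from pipeline runs.

```python
from typing import Dict, List, Set, Tuple


class LineageGraph:
    """Hypothetical in-memory lineage store: output -> (transformation, inputs)."""

    def __init__(self) -> None:
        self.records: Dict[str, Tuple[str, List[str]]] = {}

    def record(self, output: str, transformation: str, inputs: List[str]) -> None:
        """Register how a dataset was produced and which datasets it read."""
        self.records[output] = (transformation, list(inputs))

    def _inputs(self, dataset: str) -> List[str]:
        # Datasets with no record are treated as raw sources.
        return self.records[dataset][1] if dataset in self.records else []

    def upstream(self, dataset: str) -> Set[str]:
        """Every dataset that `dataset` depends on, directly or transitively."""
        seen: Set[str] = set()
        stack = list(self._inputs(dataset))
        while stack:
            current = stack.pop()
            if current not in seen:
                seen.add(current)
                stack.extend(self._inputs(current))
        return seen

    def origins(self, dataset: str) -> Set[str]:
        """Raw sources feeding `dataset`: upstream datasets with no inputs."""
        return {d for d in self.upstream(dataset) if not self._inputs(d)}


if __name__ == "__main__":
    g = LineageGraph()
    g.record("orders_clean", "dedupe + cast types", ["orders_raw"])
    g.record("customers_clean", "normalize emails", ["customers_raw"])
    g.record("revenue_report", "join + monthly aggregate",
             ["orders_clean", "customers_clean"])
    print(sorted(g.origins("revenue_report")))  # ['customers_raw', 'orders_raw']
```

The same graph answers the reverse question (which reports break if a source changes) by traversing edges in the opposite direction.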

Abstract representations of entities and relationships are structured for efficient storage, querying, and interpretation.

Market structures for exchanging data as a product align suppliers and consumers through pricing, packaging, and access controls.

Frameworks govern how data is stored, shared, and used to meet legal, contractual, and ethical expectations.

Pipelines and structures turn raw data into packaged, sellable, and repeatable products with defined schemas and use cases.

Processes and tools ensure data accuracy, completeness, and consistency through validation, monitoring, and correction.
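The validation part of this can be sketched as a set of rule checks run over each record, with one rule per quality dimension named above: completeness (required fields present), accuracy (values within an expected range), and consistency (a key column stays unique). The `validate` function, its parameters, and the sample rows are hypothetical. Monitoring and correction would sit around a check like this, for example by tracking issue counts over time and routing failing rows to a repair step.

```python
from typing import Any, Dict, List, Tuple

Row = Dict[str, Any]


def validate(rows: List[Row], required: List[str], key: str,
             ranges: Dict[str, Tuple[float, float]]) -> List[str]:
    """Return a human-readable list of quality issues (empty if none)."""
    issues: List[str] = []
    seen_keys = set()
    for i, row in enumerate(rows):
        # Completeness: every required field is present and not None.
        for col in required:
            if row.get(col) is None:
                issues.append(f"row {i}: missing {col}")
        # Accuracy: numeric fields fall inside their expected range.
        for col, (low, high) in ranges.items():
            value = row.get(col)
            if value is not None and not (low <= value <= high):
                issues.append(f"row {i}: {col}={value} outside [{low}, {high}]")
        # Consistency: the key column is unique across rows.
        k = row.get(key)
        if k is not None:
            if k in seen_keys:
                issues.append(f"row {i}: duplicate {key}={k}")
            seen_keys.add(k)
    return issues


if __name__ == "__main__":
    rows = [
        {"id": 1, "email": "a@example.com", "age": 34},
        {"id": 2, "email": None, "age": 29},
        {"id": 2, "email": "c@example.com", "age": 212},
    ]
    for issue in validate(rows, required=["id", "email"], key="id",
                          ranges={"age": (0, 120)}):
        print(issue)
    # row 1: missing email
    # row 2: age=212 outside [0, 120]
    # row 2: duplicate id=2
```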

Solutions architect role redesigning a fundamentally flawed Pentaho ETL pipeline into a scalable AWS Redshift data warehouse for a hospitality leader. Identified root...